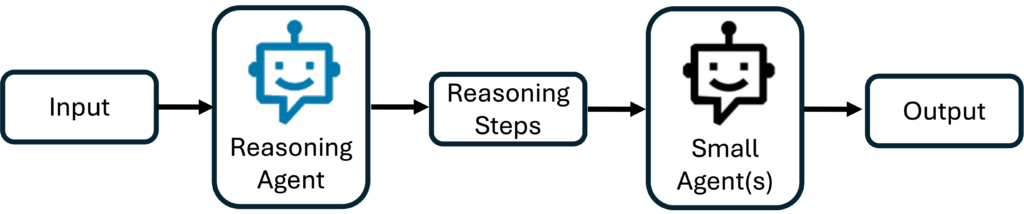

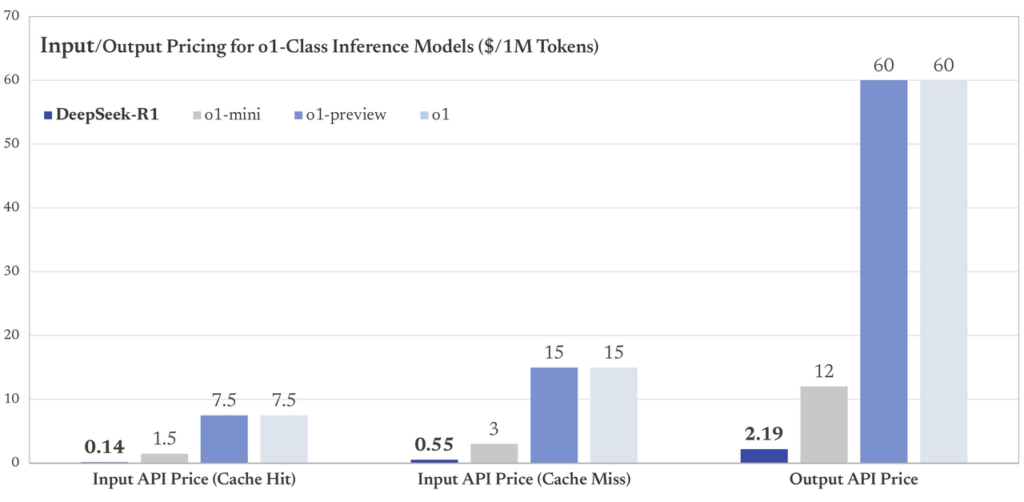

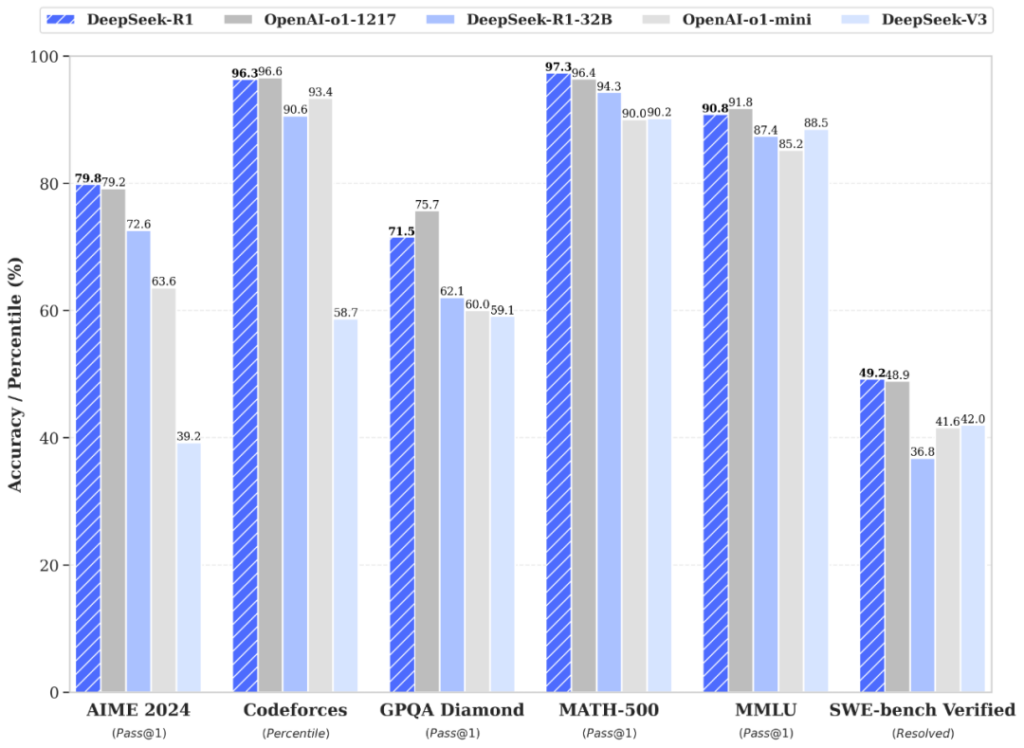

The AI industry stands at a crossroads. On one side, powerful models like GPT-4 dazzle with their capabilities but drain budgets with eye-watering costs. On the other, smaller models promise affordability but lack the sophistication to handle complex tasks. Developers and businesses are caught in the middle, forced to choose between overspending and underperforming. Enter **DeepSeek R1**, an open-source large language model that shatters this compromise. By reimagining how AI systems collaborate, it delivers high performance at a fraction of the cost, without sacrificing intelligence.

The core challenge lies in the economics of AI. Model pricing varies wildly: a single API call to a premium model can cost hundreds of times more than a call to a budget alternative. But most workflows aren’t monolithic. A task like analyzing a customer review might involve sentiment analysis, keyword extraction, and summarization, steps that don’t all require the same computational firepower. Yet traditional systems default to a single expensive model for every step, inflating costs unnecessarily. This inefficiency is compounded by the manual effort of stitching together specialized models, which adds complexity and slows development.

Since OpenAI released o1, the AI industry has embraced the concept of **reasoning-driven delegation**, but it quickly ran into o1’s high costs. DeepSeek R1 builds on this idea and removes the cost barrier with a far more affordable approach. Instead of treating tasks as monolithic blocks, it acts like a strategic project manager: it breaks problems into logical steps and assigns each step to the most cost-effective model. Routine tasks go to lower-cost options, moderate challenges go to mid-tier models, and only the toughest problems are escalated to top-tier experts. By automatically optimizing for both accuracy and expense, DeepSeek R1 combines the power of reasoning-driven delegation with a budget-friendly price point.
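The tiered delegation described above can be sketched as a small routing table. This is a minimal illustration, not DeepSeek’s implementation: the tier names, the per-call prices, and the difficulty label attached to each step are all hypothetical placeholders. In a real workflow, the difficulty labels would come from the reasoning model’s plan rather than being hard-coded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    cost_per_call: float  # hypothetical USD price, for illustration only


# Hypothetical tiers; substitute real models and their current prices.
TIERS = {
    "routine": Tier("low-cost-model", 0.0001),
    "moderate": Tier("mid-tier-model", 0.002),
    "hard": Tier("top-tier-model", 0.03),
}


def route(difficulty: str) -> Tier:
    """Map a step's difficulty label to the cheapest tier assumed to handle it."""
    return TIERS[difficulty]


def plan_cost(steps: list[tuple[str, str]]) -> float:
    """Total cost when each step is routed to its own tier."""
    return sum(route(difficulty).cost_per_call for _, difficulty in steps)


def premium_only_cost(steps: list[tuple[str, str]]) -> float:
    """Total cost when every step is sent to the top tier."""
    return len(steps) * TIERS["hard"].cost_per_call


# The customer-review example; the difficulty labels are assumptions.
review_steps = [
    ("sentiment analysis", "routine"),
    ("keyword extraction", "routine"),
    ("summarization", "moderate"),
]

if __name__ == "__main__":
    for step, difficulty in review_steps:
        print(f"{step:20} -> {route(difficulty).name}")
    print(f"routed:       ${plan_cost(review_steps):.4f}")
    print(f"premium-only: ${premium_only_cost(review_steps):.4f}")
```

With these made-up prices, the routed plan costs $0.0022 versus $0.0900 for sending every step to the top tier; the real ratio depends entirely on the models and prices you plug in.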

Take the example of counting the letter “r” in the word “strawberry.” A traditional approach might send the entire task to a high-end model, burning resources on a problem that requires minimal reasoning. DeepSeek R1, by contrast, first generates a step-by-step plan: identify all characters, filter for “r,” and tally the results. It then delegates the execution (data extraction and counting) to a lightweight, low-cost model such as GPT-3.5 Turbo, or even a small language model (SLM) such as Microsoft’s Phi series. The expensive reasoning model is used only once, while cheaper models handle the repetitive work. This approach isn’t just theoretical. Benchmarks show DeepSeek R1 reducing costs by **78% compared to OpenAI’s o1 model** (Figure 2), a figure backed by transparent pricing comparisons.
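The plan-then-delegate pattern in the strawberry example can be sketched as follows. The function names are hypothetical: `plan` stands in for a single call to the expensive reasoning model and returns a hard-coded step list, and `execute` stands in for the cheap executor model. The call counter is there only to illustrate that the planner runs once while the executor handles every step.

```python
from collections import Counter

calls = Counter()  # how often each (hypothetical) model is invoked


def plan(task: str) -> list[str]:
    """Stand-in for one call to the reasoning model; returns a step list."""
    calls["reasoning_model"] += 1
    # Hard-coded for illustration; a real planner would generate these steps.
    return ["identify_characters", "filter_target", "tally"]


def execute(step: str, state: dict) -> dict:
    """Stand-in for a cheap executor model that performs one plan step."""
    calls["executor_model"] += 1
    if step == "identify_characters":
        state["chars"] = list(state["word"])
    elif step == "filter_target":
        state["matches"] = [c for c in state["chars"] if c.lower() == state["target"]]
    elif step == "tally":
        state["count"] = len(state["matches"])
    else:
        raise ValueError(f"unknown step: {step}")
    return state


def count_letter(word: str, target: str) -> int:
    """Plan once with the expensive model, then run each step cheaply."""
    state = {"word": word, "target": target.lower()}
    for step in plan(f"Count '{target}' in '{word}'"):
        state = execute(step, state)
    return state["count"]


if __name__ == "__main__":
    print(count_letter("strawberry", "R"))  # 3
    print(dict(calls))  # {'reasoning_model': 1, 'executor_model': 3}
```

In a real system, each `execute` call would be a request to a low-cost model and `plan` a single request to the reasoning model; keeping them separate is what lets the expensive call happen exactly once per task.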

But the innovation doesn’t stop at cost-cutting. DeepSeek R1’s open-source architecture invites developers to build custom ecosystems. If a new model emerges, say an ultra-efficient code generator or a domain-specific medical analyzer, it can be integrated into the workflow without friction. This flexibility is especially valuable for startups and researchers, who often lack the budgets to experiment with cutting-edge tools. A small team could start with a single execution model, then expand its toolkit as needs grow, all without overhauling its infrastructure. The open-source nature also fosters transparency: developers can audit why DeepSeek R1 chose a specific model for a task and fine-tune the logic to match their priorities.

Consider the implications for industries like customer support. A typical workflow might involve classifying tickets, extracting key details, and generating responses. With DeepSeek R1, the reasoning model identifies urgent issues that require human-like empathy and assigns them to premium models, while routing simple FAQs to cheaper alternatives. This dynamic allocation means businesses don’t pay GPT-4 prices to answer “What’s my account balance?” when a $0.0001-per-call model could handle the question. Similarly, in data analysis, DeepSeek R1 could decompose a report into extraction, trend identification, and visualization, assigning each step to a model optimized for speed, accuracy, or cost.

Critics might argue that delegating tasks introduces new risks. What if the reasoning model makes a flawed plan? Or what if a cheap model fails on a critical step? DeepSeek R1 addresses this in two ways. First, its reasoning process isn’t a black box: developers can review and adjust the logic, much like editing a project manager’s task list. Second, the model’s training emphasizes error detection, allowing it to flag uncertain outputs so that tasks can be rerouted as needed. The result is a self-correcting system, designed to balance autonomy with oversight.
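The customer-support routing and the idea of flagging uncertain outputs for rerouting can be combined in one sketch. Everything here is hypothetical: the two model functions, the keyword-based `classify` (a stand-in for the reasoning model’s triage judgment), the fixed confidence scores, and the 0.7 threshold. The `REGISTRY` dictionary illustrates how a newly released model could be plugged in as one new entry without changing the routing code.

```python
from typing import Callable

# A "model" here is any function returning (answer, confidence in [0, 1]).
Model = Callable[[str], tuple[str, float]]


def cheap_faq_model(ticket: str) -> tuple[str, float]:
    # Hypothetical stand-in: confident only on questions it "knows".
    if "balance" in ticket.lower():
        return ("Your balance is shown under Account > Overview.", 0.95)
    return ("Please see our FAQ.", 0.4)


def premium_model(ticket: str) -> tuple[str, float]:
    # Hypothetical stand-in for an expensive, more capable model.
    return (f"Personalized reply to: {ticket}", 0.9)


# Adding a new model is one new entry here, not a pipeline rewrite.
REGISTRY: dict[str, Model] = {"faq": cheap_faq_model, "premium": premium_model}

ESCALATION_THRESHOLD = 0.7  # hypothetical confidence cutoff
URGENT_WORDS = ("urgent", "angry", "cancel", "fraud")


def classify(ticket: str) -> str:
    """Keyword stand-in for the reasoning model's triage decision."""
    return "premium" if any(w in ticket.lower() for w in URGENT_WORDS) else "faq"


def handle(ticket: str) -> tuple[str, str]:
    """Route a ticket; escalate to the premium model on low confidence."""
    tier = classify(ticket)
    answer, confidence = REGISTRY[tier](ticket)
    if confidence < ESCALATION_THRESHOLD and tier != "premium":
        tier = "premium"
        answer, _ = REGISTRY[tier](ticket)
    return tier, answer


if __name__ == "__main__":
    for t in [
        "What's my account balance?",
        "URGENT: I was charged twice and want to cancel",
        "How do I change my shipping address?",
    ]:
        print(handle(t)[0], "<-", t)
```

Here the balance question stays on the cheap model, the urgent ticket goes straight to the premium model, and the shipping question is escalated because the cheap model reports low confidence. In practice, the confidence signal and the triage decision would come from real models rather than fixed numbers and keyword lists.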
The environmental impact of this efficiency can’t be ignored. Training and running massive AI models consume vast amounts of energy, contributing to carbon footprints. By minimizing reliance on oversized models, DeepSeek R1 reduces computational waste, a win for both budgets and sustainability. This aligns with a growing industry trend toward “green AI,” where efficiency isn’t just about speed or cost but also about ecological responsibility.

Looking ahead, DeepSeek R1 signals a broader shift in AI development. The era of monolithic models isn’t over, but their role is evolving. Instead of being the default solution, they’ll serve as specialized tools within modular ecosystems. Imagine a future where developers assemble AI workflows like Lego blocks, combining reasoning models, domain-specific executors, and real-time validators. DeepSeek R1’s open-source framework is a stepping stone toward that vision, showing that collaboration between models can outperform solitary giants.

For businesses, the stakes are clear. As AI adoption grows, so does competition. Companies that leverage cost-efficient systems will outpace rivals burdened by bloated AI expenses. A mid-sized e-commerce firm, for example, could deploy DeepSeek R1 to handle product inquiries, using cheaper models for inventory checks while reserving advanced models for personalized recommendations. The result? Faster response times, happier customers, and margins protected from AI’s hidden costs.

The true power of DeepSeek R1 lies in its democratizing effect. By lowering barriers to entry, it empowers startups, researchers, and nonprofits to innovate without financial handcuffs. A student building a climate prediction tool can access the same strategic delegation as a Fortune 500 company. This inclusivity accelerates progress, ensuring the next breakthrough in AI isn’t confined to well-funded labs but emerges from a global community of thinkers and tinkerers.

In the end, DeepSeek R1 isn’t just a technical marvel—it’s a challenge to the status quo. It asks developers to rethink not just how they build AI systems, but why. Is the goal to chase benchmarks with ever-larger models, or to create solutions that are intelligent, affordable, and accessible? The answer, increasingly, is the latter. As the AI landscape matures, efficiency and practicality will define success. With DeepSeek R1, that future isn’t a distant promise—it’s here, and it’s open for everyone to explore.

Ready to transform how you build AI? Dive into DeepSeek R1’s [documentation](https://api-docs.deepseek.com), experiment with its open-source framework, and join a community redefining what’s possible—without breaking the bank. The next era of AI isn’t just smarter; it’s smarter *and* sustainable. Let’s build it together.
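For readers heading to the documentation, here is a minimal sketch of preparing a request to DeepSeek R1 using only the Python standard library. It assumes the OpenAI-compatible endpoint `https://api.deepseek.com/chat/completions` and the model name `deepseek-reasoner`, as described in DeepSeek’s API documentation at the time of writing; check the current docs before relying on either. The helper name `build_r1_request` is hypothetical, and the request is built but not sent, so the example runs without an API key.

```python
import json
import os
import urllib.request

# Endpoint and model name as documented by DeepSeek at the time of writing.
DEEPSEEK_URL = "https://api.deepseek.com/chat/completions"


def build_r1_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completion request for DeepSeek R1."""
    payload = {
        "model": "deepseek-reasoner",  # API name for DeepSeek R1
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        DEEPSEEK_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_r1_request(
        "Plan the steps to count the letter r in 'strawberry'.",
        os.environ.get("DEEPSEEK_API_KEY", "sk-placeholder"),
    )
    print(req.get_method(), req.full_url)
    # To send it: urllib.request.urlopen(req), then json.load the response.
    # Per DeepSeek's docs, the reasoning trace is returned in
    # choices[0].message.reasoning_content, separate from message.content.
```

Because the endpoint follows the OpenAI chat-completions format, the plan returned in `content` can be parsed and handed to cheaper executor models in the same way as any other step list.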

Source for figures: [DeepSeek](https://api-docs.deepseek.com/news/news250120)

S.A.M

Shady Mohammed is a seasoned AI/ML Cloud Specialist and Cloud Solutions Architect with over 10 years of experience spanning engineering consulting, cloud computing, and solution architecture. With a Ph.D. in Data Analysis and Applied Analytics, Shady has led more than 15 CPG projects and holds three U.S. patents in applied AI. His expertise includes designing scalable AI models, optimizing cloud infrastructures, and leading cross-functional teams to deliver innovative solutions for federal and private sectors. Shady's commitment to leveraging emerging technologies and aligning them with business objectives ensures impactful results and continuous improvement.